Cloud Computing Parallelism

Computing Heads for the Clouds

Today, more transistors are being produced annually than grains of rice -- and at a lower cost! The challenge for IT is figuring out what to do with all that computing power. That means harnessing parallelism.

Supercomputers exploited parallelism by using mathematics to break down matrix-oriented problems into multiple subproblems that could be worked on simultaneously. Relational DBMS vendors, taking advantage of the relational model's mathematical foundation, have long been able to decompose queries into sets of operations that can run in parallel on independent processors.
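To make the idea of splitting a matrix problem into independent subproblems concrete, here is a minimal Python sketch. It is an illustration only, not how any particular supercomputer works: the function names `multiply_rows` and `parallel_matvec` are made up for this example, and threads stand in for separate processors.

```python
from concurrent.futures import ThreadPoolExecutor


def multiply_rows(rows, vector):
    """Subproblem: multiply one block of matrix rows by the vector."""
    return [sum(a * b for a, b in zip(row, vector)) for row in rows]


def parallel_matvec(matrix, vector, blocks=2):
    # Cut the matrix into contiguous row blocks; no block depends on another.
    size = max(1, -(-len(matrix) // blocks))  # ceiling division
    row_blocks = [matrix[i:i + size] for i in range(0, len(matrix), size)]
    # Hypothetical stand-in: each thread plays the role of one processor.
    with ThreadPoolExecutor(max_workers=blocks) as pool:
        parts = list(pool.map(multiply_rows, row_blocks,
                              [vector] * len(row_blocks)))
    # Stitch the partial results back together in their original order.
    return [value for part in parts for value in part]


matrix = [[1, 2], [3, 4], [5, 6], [7, 8]]
vector = [10, 1]
product = parallel_matvec(matrix, vector)
print(product)  # [12, 34, 56, 78]
```

Each row block can be computed without knowing anything about the others, which is what makes this kind of problem easy to parallelize. In CPython, threads will not actually speed up pure-Python arithmetic like this; the sketch shows the shape of the decomposition, not a performance technique.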

It was Google, however, that most successfully figured out how to employ high-performance parallel programming techniques to power its search engine, connecting a million cheap, PC-like servers into what is effectively the world's largest supercomputer. The Google cloud helps ferret out answers to billions of queries, each in a fraction of a second.

Traditionally, supercomputers have been used mainly by research labs owned by the military, government intelligence agencies, universities and very large companies. The problems they've historically tackled have generally involved enormously complex calculations for such tasks as simulating nuclear explosions, predicting climate change, or designing airplanes.

Cloud computing aims to apply supercomputer power -- measured in the tens of trillions of computations per second -- in a way that users can tap through the Web by spreading data-processing chores across large groups of networked servers.

"Google and the Wisdom of Clouds" describes how Google, teamed with IBM, is introducing students, researchers, and entrepreneurs to the immense power of Google-style computing.

Unlike traditional supercomputers, Google's system never ages. When its individual pieces die, usually after about three years, engineers pluck them out and replace them with new, faster boxes. This means the cloud regenerates as it grows, almost like a living thing.

A move towards clouds signals a fundamental shift in how we handle information. At the most basic level, it's the computing equivalent of the evolution in electricity a century ago when farms and businesses shut down their own generators and bought power instead from efficient industrial utilities.

The software at the heart of Google computing is called "MapReduce." MapReduce delivers Google's speed and industrial heft. It divides each task into hundreds, or even thousands, of smaller tasks and distributes them to legions of computers. In a fraction of a second, as each one comes back with its nugget of information, MapReduce assembles the responses into an answer.
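The divide, distribute, and assemble pattern can be sketched in a few lines of Python. This is a toy word count that assumes a single machine, with a thread pool standing in for the legions of computers. It is not Google's MapReduce or Hadoop's API, and the names `map_chunk`, `reduce_counts`, and `map_reduce_word_count` are invented for the example.

```python
from collections import Counter
from concurrent.futures import ThreadPoolExecutor


def map_chunk(chunk):
    """Map step: count the words in one chunk of documents."""
    counts = Counter()
    for doc in chunk:
        counts.update(doc.lower().split())
    return counts


def reduce_counts(partials):
    """Reduce step: merge the partial counts into one answer."""
    total = Counter()
    for partial in partials:
        total.update(partial)
    return total


def map_reduce_word_count(documents, workers=4):
    # Divide the job into one chunk per worker (the smaller tasks).
    chunks = [documents[i::workers] for i in range(workers)]
    # Assumption: threads stand in for the machines of a real cluster.
    with ThreadPoolExecutor(max_workers=workers) as pool:
        partials = list(pool.map(map_chunk, chunks))
    # Assemble the nuggets that came back into a single result.
    return reduce_counts(partials)


docs = ["the cloud grows", "the cloud regenerates", "clouds of servers"]
result = map_reduce_word_count(docs)
print(result["the"], result["cloud"])  # 2 2
```

The map step never needs to see another worker's chunk, and the reduce step only needs the partial counts, so the same structure can in principle be spread over as many machines as there are chunks.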

There's an open-source version of the MapReduce architecture of cloud computing called "Hadoop." The team that developed Hadoop belonged to a company, Nutch, that got acquired. Oddly, they are now working within the walls of Yahoo, which is counting on the MapReduce offspring to give its own computers a touch of Google magic. Hadoop, though, remains open source.

What will computing clouds look like? They'll function as huge virtual laboratories "curating" troves of data. All sorts of business models are sure to evolve. Google's CEO, Eric Schmidt, likes to compare the cloud-based supercomputer data centers to the prohibitively expensive particle accelerators known as cyclotrons. "There are only a few cyclotrons in physics," he says. "And every one of them is important, because if you're a top-flight physicist you need to be at the lab where that cyclotron is being run. That's where history's going to be made; that's where the inventions are going to come." As Mark Dean, head of IBM's research operation in Almaden, Calif., says of these new cloud computing labs, in the future "you may win the Nobel prize by analyzing data assembled by someone else."

As Google, IBM, Microsoft, Yahoo!, and Amazon lead the world in building massive cloud computing data centers with massively parallel processing capabilities, the only constraint may be finding enough electricity to power their truly amazing infrastructures.

The new border would run from southwest to northeast roughly through Baghdad's airport.

In 1979, futurist Alvin Toffler coined the term "prosumer" to describe the open source-like phenomenon of people producing what they consume. The term applies to individuals who prefer to be involved in designing the things they purchase. In other words, new products and/or services are created by combining together the roles of producer and consumer.

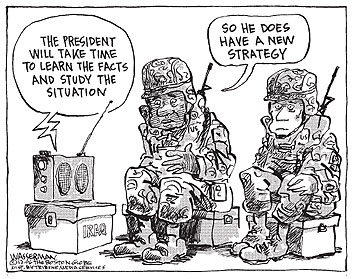

Perhaps because George W. Bush was once part owner of the Texas Rangers baseball team, it's fitting that his presidential administration's legacy will most likely be remembered by the baseball metaphor Three Strikes and You're Out.

The bipartisan Iraq Study Group delivered, in stark terms, a broad indictment that U.S. policy in Iraq is not working. The panel, headed by former secretary of state Jim Baker and former Indiana congressman Lee Hamilton, describes our situation there as "grave and deteriorating."

Renowned author and high technology futurist George Gilder has developed what he describes as

Wired Magazine recently published a fascinating article written by George Gilder called

that most Americans have never been asked to sacrifice anything. The burden of national defense should be borne by everyone -- not just a very small few.

With the election now over and the Democrats getting ready to take control of both the House and Senate in the next Congress, let's hope they don't overreach. That would be the natural tendency for a party that's long been out of power. Congress shouldn't try to control America's foreign policy no matter how much they may want to. That's the job of the President. Congress must not engage in impeachment hearings or assigning partisan blame for past mistakes. That would be a total waste of time and energy.

We didn't go to war in Iraq because of Weapons of Mass Destruction (WMDs). There weren't any.

In "The Dark Side," FRONTLINE tells the story of the vice president's role as the chief architect of the war on terror, and his battle with Director of Central Intelligence George Tenet for control of the "dark side."

As the late, charismatic astronomer and writer Carl Sagan said, referring to the picture of the Earth presented to the right:

The Senate voted 57-41, three votes short of advancing the bill, to reject a Republican effort to slash taxes on inherited estates. This vote preserves the estate tax, for now. The estate tax is currently paid only by those who inherit more than $2 million. According to the most recent statistics available from the Internal Revenue Service, 1.17 percent of people who died in 2002 left a taxable estate. Senate Majority Leader Bill Frist, R-Tenn, says the "death tax is unfair."

When it comes to enterprise IT, there's a continuum that ranges between strategic and support. The telltale indicator is to look at where the CIO reports. Does he or she report directly to the CEO, or does the CIO report to the CFO?

If it's the latter, then IT's role is perceived as one of providing support. In this case, when business leaders think IT, they invariably think cost center. With a support IT organization, the most important objective is reducing IT operational and maintenance costs. Beware that EA groups are themselves frequently considered cost-overhead and as such are subject to cost-cutting purges. Generally, the best strategy for cutting costs is to focus initial EA initiatives on consolidation.

America is addicted to oil and that addiction is killing us -- fiscally, environmentally, and spiritually.
